User feedback on a live mobile app

Why do I need to gather feedback through my live mobile app?

An honest look at your latest project

So, you’ve launched the first version of your app. After months of hard work designing and developing your latest project, you’re convinced that nothing could possibly be wrong with your work. The way you display information to the user is crystal clear, right? There’s no chance anybody will ever be remotely confused by your implementation. Your conversion rate will surely be 100% or higher… you get the idea. Clearly, this will not be the case.

The thing is, even with the best of intentions, you’re gonna make a few mistakes. Some of the assumptions you made when designing your screens are eventually going to be proved wrong. This happens to us all the time and we have to acknowledge that it is a part of the process.

At Brightec, we user test our initial design concepts to gather as much input as we can in the early stages of a project. This gains us a LOT of feedback in a very short space of time, but even though we test our ideas, it is a different thing entirely to watch thousands of end-users reacting to our work once it’s out in the big wide world.

The problem with stubbornly sticking to your original assumptions is that you’ll probably be able to twist the cold statistics from your app analytics to support your theory, even if you’re wrong. A plain conversion rate does not tell you the full story. There could be hundreds (if not thousands) of reasons why that figure is high or low.

My point is, we need to learn not to settle for our initial idea of what we could achieve. We need real humans to give us feedback and tell us where we are doing really well, and where we could improve.

How do I capture feedback from my active user base?

There are plenty of ways to go about this, but for this post I’ll focus on one: in-line feedback prompts.

Now I’m not talking about shouty, pop-up, in-your-face alerts. I’m talking about nicely designed solutions that meet the user where they’re experiencing their biggest issue, or biggest success.

We’ve been developing an app called Trainsplit for a little under two years now. We’re really proud of it for the way it's helped thousands of users to save thousands of pounds on their train fares. You can check it out on iOS and Android.

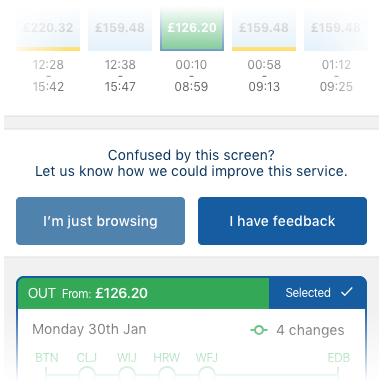

We knew that the most significant screen in that app is the Search Results screen. That’s the place where users decide whether or not Trainsplit can find them a train fare they like. We identified early on that this screen is where the battle is won or lost. We tested our design concept at our offices with real users before the initial release, but once it was live we quickly realised that there was much more to be understood about how people were using it.

We knew from our analytics that users spent an average of 30 seconds on this screen. We also knew that, on average, they interacted with the graph six times before moving forwards or backwards in the app.

With this baseline understanding, we set up some triggers in the code to show a feedback dialog. If a user was active on the screen for more than double the average length of time, we could safely assume that they were either confused by their results, or that they were really enjoying browsing the options we were providing them.

In both cases, we want to hear from this person in order to improve the service for everybody. The same is true for the number of interactions. If a user is tapping around on lots of options, we want their thoughts too.
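To make the trigger rule concrete, here is a minimal sketch in Python. It is not the actual Trainsplit code. The 30-second and six-interaction baselines come from the analytics figures above, and "more than double" is the rule described for time on screen. Applying the same multiplier to the interaction count is an assumption, as are all of the names used.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeedbackThresholds:
    # Baselines quoted in this post.
    average_seconds_on_screen: float = 30.0
    average_interactions: int = 6
    # "More than double the average" is the stated rule for time on screen.
    # Reusing it for interactions is an assumption made for this sketch.
    multiplier: float = 2.0


def should_prompt_for_feedback(seconds_on_screen, interactions,
                               thresholds=FeedbackThresholds()):
    """Return True when either measure is well above its average."""
    lingering = (
        seconds_on_screen
        > thresholds.average_seconds_on_screen * thresholds.multiplier
    )
    exploring = (
        interactions
        > thresholds.average_interactions * thresholds.multiplier
    )
    return lingering or exploring
```

With these defaults, a user who has been on the screen for 61 seconds, or who has tapped the graph 13 times, would see the prompt. A user at exactly 60 seconds or 12 taps would not.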

If a user triggers the feedback dialog, we do not block their progress. They can still use the app as they please. But if they do respond to our message, they are taken to an email client, where they can share their point of view with us. This also allows us to pre-populate the email with potentially helpful information such as their app version and device type, which can prove vital when troubleshooting any issues that arise.
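One common way to hand off to an email client with pre-filled content is a `mailto:` link. The sketch below shows the idea under stated assumptions: the address, subject line, and body layout are all hypothetical, and a real app would read the version and device details from the platform rather than take them as arguments.

```python
from urllib.parse import quote


def build_feedback_mailto(address, app_version, device_type):
    """Build a mailto: URL with diagnostics pre-filled in the body."""
    subject = "App feedback"  # hypothetical subject line
    # Leave blank lines at the top for the user's own message, then append
    # the details that help with troubleshooting. CRLF line breaks are what
    # mailto: bodies expect once percent-encoded.
    body = (
        "\r\n\r\n---\r\n"
        f"App version: {app_version}\r\n"
        f"Device: {device_type}\r\n"
    )
    return (
        f"mailto:{address}"
        f"?subject={quote(subject, safe='')}"
        f"&body={quote(body, safe='')}"
    )
```

For example, `build_feedback_mailto("feedback@example.com", "1.4.2", "Pixel 7")` produces a link that opens a draft addressed to that placeholder mailbox, with the version and device already filled in beneath the space for the user's message.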

What now - what to do with this newfound information?

We’ve set up our feedback loop to go to a specific email address which we monitor on a weekly basis. We record the reasons for customer feedback in a spreadsheet, which allows us to easily see the predominant issues that our users are experiencing.

Having an ordered list of the major issues which real people are experiencing is absolutely priceless. It is so much more valuable than a simple number on an analytics dashboard. When we have meetings with the client about upcoming feature priorities, we can base our decisions on user feedback, as opposed to personal preference.
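The ordering itself is simple to produce once each piece of feedback has been given a reason. As a rough sketch, assuming the reasons have been exported from the spreadsheet as a plain list of labels (the labels in the example are made up):

```python
from collections import Counter


def rank_issues(feedback_reasons):
    """Return (reason, count) pairs, most frequently reported first."""
    # Normalise case and whitespace so that "Confusing graph" and
    # "confusing graph " are counted as the same reason; skip blanks.
    normalised = (
        reason.strip().lower()
        for reason in feedback_reasons
        if reason.strip()
    )
    return Counter(normalised).most_common()
```

Calling `rank_issues(["Confusing graph", "confusing graph ", "Slow search", ""])` groups the first two entries together and ignores the blank one, so the most reported reason comes first.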

In addition to the above, we have set up the parameters that control when the feedback dialog is displayed so that they can be changed through Firebase Remote Config. This means that if user behaviour changes, we can adjust our parameters so that we don’t annoy people by displaying the dialog too early, or miss information by displaying it too late.

We saw this happen as our user base grew over the first few months of release. The average time and interaction count changed quite dramatically, as we had a different proportion of new users and returning users. So we changed our parameters to adapt.
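The general pattern is to ship sensible defaults in the app and let remotely supplied values override them. The sketch below shows that pattern only. A plain dictionary stands in for whatever the remote configuration service returns, so none of this is the Firebase API, and the key names are hypothetical. The defaults are double the averages quoted earlier (30 seconds and six interactions). Doubling the interaction count is an assumption.

```python
# In-app defaults, used when no usable remote value is available.
DEFAULTS = {
    "feedback_min_seconds": 60.0,
    "feedback_min_interactions": 12.0,
}


def resolve_feedback_parameters(remote_values, defaults=DEFAULTS):
    """Overlay remote values on the defaults, ignoring unusable entries."""
    resolved = dict(defaults)
    for key in defaults:
        raw = remote_values.get(key)
        try:
            value = float(raw)
        except (TypeError, ValueError):
            # Missing or malformed remote value: keep the default.
            continue
        # Only accept positive thresholds; anything else keeps the default.
        if value > 0:
            resolved[key] = value
    return resolved
```

Falling back to the default for missing, malformed, or non-positive values means a mistake in the remote settings cannot switch the dialog on for every user at once.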

Another release, which has been directly influenced by this user feedback method, is being reviewed by Apple as I write this. We know it will solve some of the issues people have raised. But we also know there will be more feedback, and that user behaviour will continue to change. With this honest and humble approach to our work, we can vouch whole-heartedly for gathering user feedback through a live app. It is fundamentally improving our client’s product on an ongoing basis.

We love to learn by studying and sharing the knowledge of our business and the projects we work on. Click here to read more about User Testing at Brightec.
